Facial recognition and fundamental rights 101

This is the first post in a series about the fundamental rights impacts of facial recognition. Private companies and governments worldwide are already experimenting with facial recognition technology. Individuals, lawmakers, developers - and everyone in between - should be aware of the rise of facial recognition, and the risks it poses to rights to privacy, freedom, democracy and non-discrimination.

In November 2019, an online search on “facial recognition” churned up over 241 million hits – and the suggested results imply that many people are unsure about what facial recognition is and whether it is legal. Although the first uses that come to mind might be e-passport gates or phone apps, facial recognition has a much broader and more complex set of applications, and is becoming increasingly ubiquitous in both public and private spaces – which can impact a wide range of fundamental rights.

What the tech is facial recognition all about?

Biometrics is the process that makes data out of the human body – literally, your unique “bio”-logical qualities become “metrics.” Facial recognition is a type of biometric application which uses statistical analysis and algorithmic predictions to automatically measure and identify people’s faces in order to make an assessment or decision. Facial recognition can broadly be categorised in terms of the increasing complexity of the analytics used: from verifying a face (this person matches their passport photo), to identifying a face (this person matches someone in our database), to classifying a face (this person is young). Not all uses of facial recognition are the same and, therefore, neither are the associated risks. Facial recognition can be done live (e.g. analysis of CCTV feeds to see if someone on the street matches a criminal in a police database) or non-live (e.g. matching two photos); non-live analysis has a higher rate of accuracy.

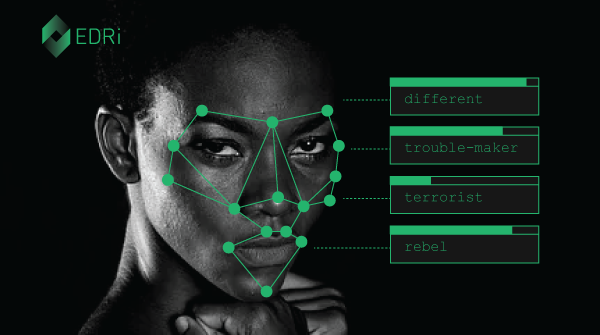

There are opportunities for error and inaccuracy in each category of facial recognition, with classification being the most controversial because it claims to judge a person’s gender, race, or other characteristics. This categorisation can lead to assessments or decisions that infringe on the dignity of gender non-conforming people, embed harmful gender or racial stereotypes, and lead to unfair and discriminatory outcomes.

Furthermore, facial recognition is not about facts. According to the European Union Agency for Fundamental Rights (FRA), “an algorithm never returns a definitive result, but only probabilities” – and the problem is exacerbated as the data on which the probabilities are based reflects social biases. When these statistical likelihoods are interpreted as if they are a neutral certainty, this can threaten important rights to fair and due process. This in turn has an impact on individuals’ ability to seek justice when facial recognition infringes on their rights. Digital rights NGOs warn that facial recognition can harm privacy, security and access to services, especially for marginalised communities. A powerful example of this is when facial recognition is used in migration and asylum systems.
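To make the point about probabilities concrete, here is a toy sketch in Python. It assumes that two face images have already been turned into lists of numbers (feature vectors) and that the system compares them with a similarity score. The vectors, the function names and both thresholds are invented for illustration and do not describe any real product. What it shows is that the same score can become a "match" or a "non-match" depending on a threshold that someone chose.

```python
import math

def cosine_similarity(a, b):
    """Return a similarity score in [-1, 1] for two feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def is_match(score, threshold):
    """Turn a score into a yes/no answer; the threshold is a human choice."""
    return score >= threshold

# Hypothetical feature vectors for a camera image and a database photo.
probe = [0.9, 0.1, 0.4]
reference = [0.8, 0.3, 0.5]

score = cosine_similarity(probe, reference)  # roughly 0.97

# The same score, judged against two made-up thresholds.
lenient = is_match(score, threshold=0.95)
strict = is_match(score, threshold=0.98)
print(f"score={score:.3f} lenient={lenient} strict={strict}")
```

With these made-up numbers, the lenient setting reports a match and the strict one does not. Neither answer is a fact about the person: both are the result of a score plus a policy decision about where to draw the line.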

A question of social justice and democracy

Whilst discrimination resulting from technical issues or biased data-sets is a genuine problem, accuracy is not the crux of why facial recognition is so concerning. A facial recognition system claiming to identify terrorists at an airport, for example, could be considered 99% accurate even if it did not correctly identify a single terrorist. And greater accuracy is not necessarily the answer either, as it can make it easier for police to target or profile people of colour based on damaging racialised stereotypes. The real heart of the problem lies in what facial recognition means for our societies, including how it amplifies existing inequalities and violations, and whether it fits with our conceptions of democracy, freedom, privacy, equality, and social good. Facial recognition by definition raises questions about the balance of personal data protection, mass surveillance, commercial interests and national security which societies should carefully consider. Technology is frequently incredible, impressive, and efficient – but this should not be confused with its use being necessary, beneficial, or useful for us as a society. Unfortunately, these important questions and key issues are often decided out of public sight, with little accountability and oversight.
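The "99% accurate" claim above can be checked with simple arithmetic. The sketch below uses entirely hypothetical numbers (100,000 travellers, 10 of whom are on a watchlist) and a hypothetical `ScreeningResult` class. It shows how a system that misses every single target can still report 99% accuracy, because almost everyone it screens is not a target.

```python
from dataclasses import dataclass

@dataclass
class ScreeningResult:
    true_positives: int   # targets correctly flagged
    false_negatives: int  # targets the system missed
    false_positives: int  # ordinary travellers wrongly flagged
    true_negatives: int   # ordinary travellers correctly ignored

    @property
    def total(self) -> int:
        return (self.true_positives + self.false_negatives
                + self.false_positives + self.true_negatives)

    @property
    def accuracy(self) -> float:
        """Share of all decisions that were correct."""
        return (self.true_positives + self.true_negatives) / self.total

    @property
    def recall(self) -> float:
        """Share of actual targets that were found."""
        targets = self.true_positives + self.false_negatives
        return self.true_positives / targets if targets else 0.0

# Hypothetical airport: 100,000 travellers, 10 of them on a watchlist.
# The system misses all 10 and wrongly flags 990 other people.
result = ScreeningResult(
    true_positives=0,
    false_negatives=10,
    false_positives=990,
    true_negatives=99_000,
)

print(f"accuracy: {result.accuracy:.0%}")          # 99%
print(f"targets found: {result.recall:.0%}")       # 0%
print(f"people wrongly flagged: {result.false_positives}")
```

In this invented scenario the headline accuracy figure is 99%, yet the system finds none of the people it was deployed to find and wrongly flags 990 others. This is why a single accuracy percentage says little about whether a system works or whom it harms.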

What’s in a face?

Your face has a particular sensitivity in the context of surveillance, says France’s data protection watchdog – and as a very personal form of personal data, both live and non-live images of your face are already protected from unlawful processing under the General Data Protection Regulation (GDPR).

Unlike a password, your face is unique to you. Passwords can be kept out of sight and reset if needed – but your face cannot. If your eye is hacked, for example, there is no way to wipe the slate clean. And your face is also distinct from other forms of biometric data such as fingerprints because it is almost impossible to avoid being subject to facial surveillance when such technology is used in public places. Unlike having your fingerprints taken, your face can be surveilled and analysed without your knowledge. Your face can also be a marker of protected characteristics under international law such as your right to freely practice your religion. For these reasons, facial recognition is highly intrusive and can infringe on rights to privacy and personal data protection, among many other rights.

Researchers have highlighted the frightening assumptions underpinning much of the current hype about facial recognition, especially when used to categorise emotions or qualities based on individuals’ facial movements or dimensions. This harks back to the discredited pseudo-science of physiognomy – a favourite of Nazi eugenicists – and can have massive implications for individuals’ safety and dignity when used to make a judgement about things like their sexuality or whether they are telling the truth about their immigration status. Its use in recruitment also increases discrimination against people with disabilities. Experts warn that there is no scientific basis for these assertions – but that has not stopped tech companies churning out facial classification systems. When used in authoritarian societies or where being LGBTQI+ is a crime, this sort of mass surveillance threatens the lives of journalists, human rights defenders, and anyone who does not conform – which in turn threatens everyone’s freedom.

Why can’t we open the Black Box?

The statistical analysis underpinning facial recognition and other similar technology is often referred to as a “black box”. Sometimes this is because the technological complexity of deep learning systems means that even data scientists do not fully understand the way that the algorithmic models make decisions. Other times, this is because the private companies creating the systems use intellectual property or other commercial protections to hide their models. This means that individuals and even states are prevented from scrutinising the inner workings and decision-making processes of facial recognition tech, even though it impacts so many fundamental rights, which violates principles of transparency and informed consent.

Facial recognition and the rule of law

If this article has felt like a roll-call of human rights violations – that’s because it is. Mass surveillance through facial recognition technology threatens not just the right to privacy, but also democracy, freedom, and the opportunity to develop one’s self with dignity, autonomy and equality in society. It can have what is known as a “chilling effect” on legal dissent, stifling legitimate criticism, protest, journalism and activism by creating a culture of fear and surveillance in public spaces. Different uses of facial recognition will have different rights implications – depending not only on what they analyse in people’s faces and why, but also on the justification for the analysis. This includes whether the system meets legal requirements for necessity and proportionality – which, as the next article in this series will explore, many current applications do not.

The rule of law is of vital importance across the European Union, applying to both national institutions and private companies – and facial recognition is no exception. The EU can contribute to protecting people from the threats of facial recognition by strongly enforcing GDPR and by considering how existing or future legislation may impact upon facial recognition too. The EU should foster debates with citizens and civil society to help illuminate important questions including the differences between state and private uses of facial recognition and the definition of public spaces, and undertake research to better understand the human rights implications of the wide variety of uses of this technology. Finally, prior to deploying facial recognition in public spaces, authorities need to produce human rights impact assessments and ensure that the use passes the necessity and proportionality test.

When it comes to facial recognition, just because we can use it does not necessarily mean that we should. But what if we continue to be seduced by the allure of facial recognition? Well, we must be prepared for the violations that arise, implement safeguards for protecting rights, and create meaningful avenues for redress.

Read the rest of the facial recognition and fundamental rights series

The many faces of facial recognition in the EU

In this second installment of EDRi's facial recognition and fundamental rights series, we look at how different EU Member States, institutions and other countries worldwide are responding to the use of this tech in public spaces.

Your face rings a bell: Three common uses of facial recognition

Not all applications of facial recognition are created equal. In this third installment, we sift through the hype to analyse three increasingly common uses of facial recognition: tagging pictures on Facebook, automated border control gates, and police surveillance.

Stalked by your digital doppelganger?

In this fourth installment of EDRi’s facial recognition and fundamental rights series, we explore what could happen if facial recognition collides with data-hungry business models and 24/7 surveillance.

Dangerous by design: A cautionary tale about facial recognition

In this fifth and final installment of EDRi's facial recognition and fundamental rights series, we consider an experience of harm caused by fundamentally violatory biometric surveillance technology.

Facial Recognition & Biometric Mass Surveillance: Document Pool

Despite evidence that public facial recognition and other forms of biometric mass surveillance infringe on a wide range of EU fundamental rights, European authorities and companies are deploying these systems at a rapid rate. This has happened without proper consideration for how such practices invade people's privacy on an enormous scale; amplify existing inequalities; and undermine democracy, freedom and justice.

Facial recognition technology: fundamental rights considerations in the context of law enforcement (27.11.2019)

https://fra.europa.eu/sites/default/files/fra_uploads/fra-2019-facial-recognition-technology-focus-paper.pdf

Why ID (2019)

https://www.accessnow.org/whyid-letter/

Ban Face Surveillance (2019)

https://epic.org/banfacesurveillance/

Bots at the Gate: A Human Rights Analysis of Automated Decision-Making in Canada’s Immigration and Refugee System (16.08.2018)

https://ihrp.law.utoronto.ca/sites/default/files/media/IHRP-Automated-Systems-Report-Web.pdf

Declaration: A Moratorium on Facial Recognition Technology for Mass Surveillance Endorsements

https://thepublicvoice.org/ban-facial-recognition/endorsement/

The surveillance industry is assisting state suppression. It must be stopped (26.11.2019)

https://www.theguardian.com/commentisfree/2019/nov/26/surveillance-industry-suppression-spyware

Contribution by Ella Jakubowska, EDRi intern [at time of writing, now Policy and Campaigns Officer], with many ideas gratefully received from or inspired by members of the EDRi network