Algorithms of trauma: New case study shows that Facebook doesn’t give users real control over disturbing surveillance ads

A case study examined by Panoptykon Foundation, EDRi’s member in Poland, and showcased by the Financial Times, demonstrates how Facebook uses algorithms to deliver personalised ads that may exploit users’ mental vulnerabilities.

The experiment shows that users are unable to get rid of disturbing content: disabling sensitive interests in ad settings limits targeting options for advertisers, but does not affect Facebook’s own profiling and ad delivery practices. While much has been written about the disinformation and risks to democracy generated by social media’s data-hungry algorithms, the threat to people’s mental health has not yet received enough attention.

Disturbing ads pushed at a vulnerable user

Panoptykon, supported by Piotr Sapieżyński, a research scientist at Northeastern University, investigated the newsfeed of Joanna, a young woman and mother of a toddler, who complained that she had been exposed to content with a very specific pattern on Facebook. Examples include health-related ads, often with an emphasis on cancer, genetic disorders or other serious conditions affecting adults and children, including crowdfunding campaigns for children or young adults suffering from these diseases. Health has been a sensitive subject for Joanna, especially since one of her parents died of cancer, but also since she became a mother herself. The disturbing content that Facebook pushes to her fuels her anxiety and is an unwelcome reminder of the trauma she has experienced.

The analysis: prevalence of health-related ads confirmed

Panoptykon analysed over 2,000 ads in Joanna’s newsfeed over a period of two months. It turned out that approximately one in five of the ads presented to her was related to health, and a significant portion of these featured terminally ill children or references to fertility problems. The analysis also found 21 health-related tags among the “interests” which Facebook assigned to her in order to personalise content, including: “oncology”, “cancer awareness”, “genetic disorder”, “neoplasm” and “spinal muscular atrophy” – all inferred by the platform based on – most likely [1] – the user’s online activity on and off Facebook. This confirms our concern that Facebook allows advertisers to exploit inferred traits, sometimes of a highly sensitive nature, which users have not willingly disclosed.
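
For readers who want to run a similar tally on their own newsfeed, the kind of measurement described above can be sketched in a few lines of Python. This is only an illustration and not the tooling Panoptykon used: the sample records, the topic labels and the function names `topic_share` and `weekly_share` are all hypothetical, and it assumes each ad has already been labelled by hand with a single topic.

```python
from collections import Counter
from datetime import date

# Hypothetical hand-labelled observations: (date the ad was seen, topic label).
# These records are invented for illustration; they are not the study's data.
observed_ads = [
    (date(2021, 6, 1), "health"),
    (date(2021, 6, 1), "retail"),
    (date(2021, 6, 2), "travel"),
    (date(2021, 6, 3), "retail"),
    (date(2021, 6, 4), "finance"),
    (date(2021, 6, 8), "health"),
    (date(2021, 6, 8), "retail"),
    (date(2021, 6, 9), "travel"),
    (date(2021, 6, 10), "retail"),
    (date(2021, 6, 11), "finance"),
]


def topic_share(ads, topic):
    """Return the fraction of ads that carry the given topic label."""
    if not ads:
        return 0.0
    counts = Counter(label for _, label in ads)
    return counts[topic] / len(ads)


def weekly_share(ads, topic):
    """Return {(iso_year, iso_week): share of `topic`} to follow changes over time."""
    by_week = {}
    for seen_on, label in ads:
        iso = seen_on.isocalendar()
        by_week.setdefault((iso[0], iso[1]), []).append((seen_on, label))
    return {week: topic_share(items, topic) for week, items in sorted(by_week.items())}


if __name__ == "__main__":
    print(f"Overall health share: {topic_share(observed_ads, 'health'):.0%}")
    for week, share in weekly_share(observed_ads, "health").items():
        print(f"ISO week {week}: {share:.0%}")
```

Grouping by week rather than reporting a single overall figure makes it possible to see whether the share of a topic changes after an ad setting is adjusted.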

Facebook’s ad control tools don’t work

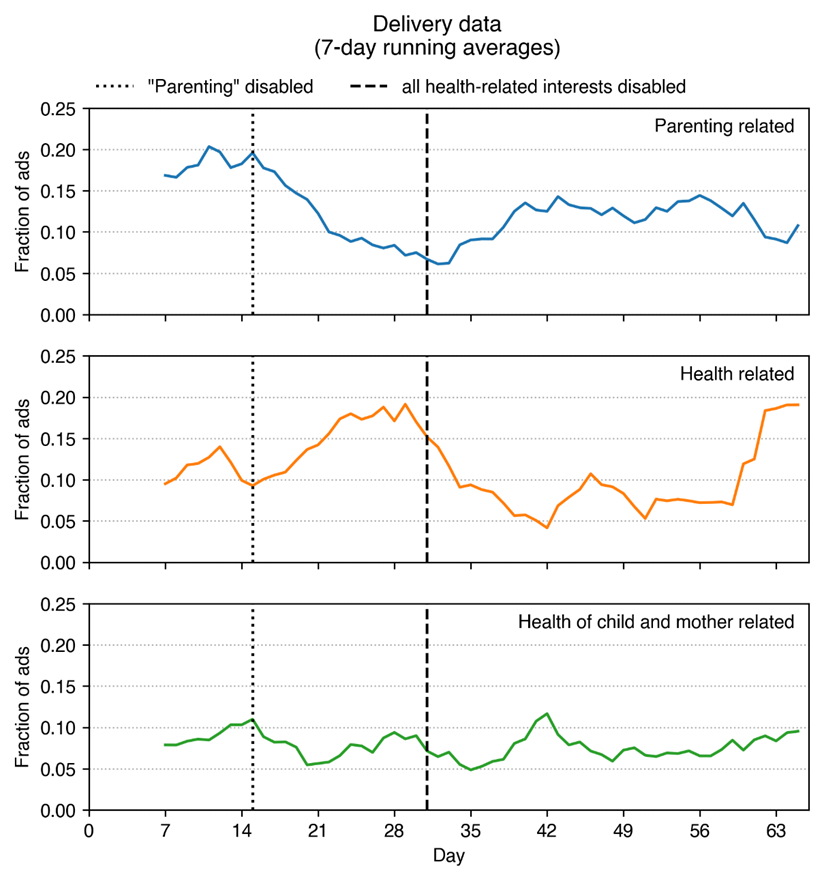

There is more bad news. The results of Panoptykon’s case study suggest that although Facebook has made some ad control tools available, users have no real way to influence how algorithms controlled by the platform shape their exposure to sponsored content. Panoptykon tested whether adjusting the controls offered by the platform (such as disabling health-related interests) would allow Joanna to eliminate the disturbing content from her newsfeed. Unfortunately, the user’s experience hardly improved when she changed her settings. The number of disturbing ads fluctuated during the experiment, but after two months it had returned to nearly the original level.

When the user requested to “See fewer ads about Parenting”, the prevalence of these ads dropped initially but grew back over time, and new interest categories even appeared for targeting, namely “Parenting” and “Childcare”. Disabling health-related interests did remove the ads targeted using those interests, but new categories were inferred, such as “Intensive Care Unit”, “Preventative Healthcare”, and “Magnetic Resonance Imaging”. None of these changes appears to have influenced the prevalence of the most problematic ads, those about prenatal and infant health.

The EU Digital Services Act: a once-in-a-decade opportunity to fix these problems

Large online platforms have become key channels through which people access information and experience the world. But the content they see is filtered through the lens of algorithms driven by commercial logic that maximises engagement in order to generate even more data about the user for the purposes of surveillance advertising. This automated fixation on campaign targets is indifferent to ‘collateral damage’: amplification of hate or disinformation, or – as this case study shows – reinforcement of trauma and anxiety.

Panoptykon’s case study shows that social media users are helpless against platforms that exploit their vulnerabilities for profit, but it is not too late to fix this. The EU Digital Services Act, currently being negotiated in the European Parliament and Council, can be a powerful tool in protecting social media users by default and empowering them to exercise real control over their data and the information they see.

The DSA can and should restrict the ability of online platforms to use algorithmic predictions for advertising and content recommendations. To avoid manipulative design that nudges users into consenting to these harmful practices, as well as ineffective control tools, users should be able to use interfaces independent from the platform, including alternative content personalisation systems built on top of the existing platform by commercial or non-commercial actors whose services better align with users’ interests.

In September, 50 civil society organisations from all around Europe sent an open letter to the members of the European Parliament’s internal market and consumer protection committee, urging them to implement these solutions in their position on the Digital Services Act.

(Contribution by: Anna Obem, Managing Director, & Karolina Iwańska, Lawyer and Policy Analyst, EDRi member Panoptykon Foundation)

[1] To better understand why the user has been exposed to this particular type of content, she submitted a GDPR subject access request to Facebook, asking about specific personal data used for delivering particular ads to her, as well as for inferring health-related interests. So far she has not received a meaningful answer.