artificial intelligence


-

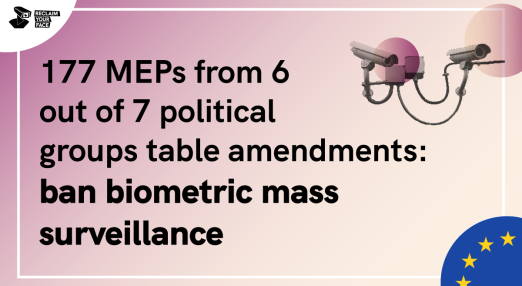

European Parliament calls loud and clear for a ban on biometric mass surveillance in AI Act

After our timely advocacy actions with over 70 organisations, the amendments to the IMCO-LIBE Committee Report for the Artificial Intelligence Act clearly state the need for a ban on Remote Biometric Identification. In fact, 24 individual MEPs, representing 158 MEPs, demand a complete ban on biometric mass surveillance practices. Now we need to keep up the pressure at European and national levels to ensure that when the AI Act is officially passed, likely in 2023 or 2024, it bans biometric mass surveillance.


-

The AI Act: EU’s chance to regulate harmful border technologies

The AI Act will be the first regional mechanism of its kind in the world, but it needs a serious update to meaningfully address the proliferation of harmful technologies tested and deployed at Europe’s borders.


-

Regulating Migration Tech: How the EU’s AI Act can better protect people on the move

As the European Union amends the Artificial Intelligence Act (AI Act), exploring the impact of AI systems on marginalised communities is vital. AI systems are increasingly developed, tested and deployed to judge and control migrants and people on the move in harmful ways. How can the AI Act prevent this?


-

Civil society reacts to European Parliament AI Act draft Report

This joint statement evaluates how far the IMCO-LIBE draft Report on the EU’s Artificial Intelligence (AI) Act, released on 20 April 2022, addresses civil society's recommendations. We call on Members of the European Parliament to support amendments that centre people affected by AI systems, prevent harm in the use of AI systems, and offer comprehensive protection for fundamental rights in the AI Act.


-

The EU’s Artificial Intelligence Act: Civil society amendments

Artificial Intelligence (AI) systems are increasingly used in all areas of public life. It is vital that the AI Act addresses the structural, societal, political and economic impacts of the use of AI, is future-proof, and prioritises affected people, the protection of fundamental rights and democratic values. The following issue papers detail civil society's proposed amendments, following the Civil Society Statement on the AI Act released in November 2021.


-

The European Parliament must go further to empower people in the AI Act

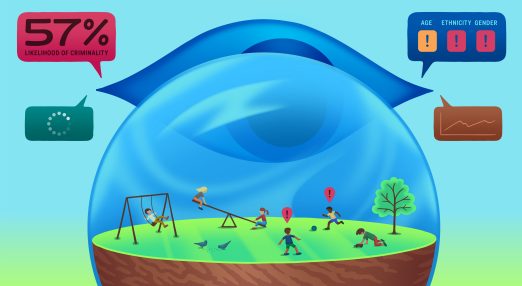

Today, 21 April, POLITICO Europe published a leak of the much-anticipated draft report on the Artificial Intelligence (AI) Act proposal. The draft report has taken important steps towards a more people-focused approach, but it has failed to introduce crucial red lines and safeguards on the uses of AI, including ‘place-based’ predictive policing systems, remote biometric identification, emotion recognition, discriminatory or manipulative biometric categorisation, and uses of AI undermining the right to asylum.


-

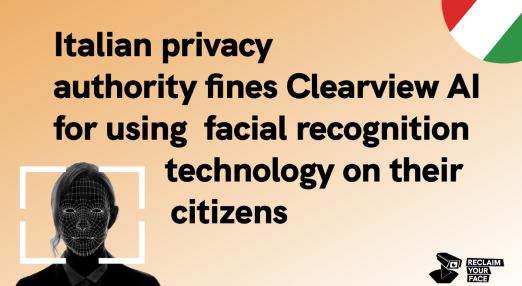

Italian DPA fines Clearview AI for illegally monitoring and processing biometric data of Italian citizens

On 9 March 2022, the Italian Data Protection Authority fined the US-based facial recognition company Clearview AI EUR 20 million after finding that the company monitored and processed biometric data of individuals on Italian territory without a legal basis. The fine is the highest amount foreseen under the General Data Protection Regulation. It was prompted by a complaint filed by the Hermes Centre in May 2021 in a joint action with EDRi members Privacy International, noyb, and Homo Digitalis, in addition to complaints filed by some individuals and a series of investigations launched in the wake of the 2020 revelations about Clearview AI's business practices.


-

The European Commission does not sufficiently understand the need for better AI law

The Dutch Senate shares Bits of Freedom's concerns about the Artificial Intelligence Act and wrote a letter to the European Commission about the need to better protect people from harmful uses of AI, such as biometric surveillance. The Commission's response is not exactly reassuring.


-

Civil society calls on the EU to ban predictive AI systems in policing and criminal justice in the AI Act

More than 40 civil society organisations, led by Fair Trials and European Digital Rights (EDRi), are calling on the EU to ban predictive systems in policing and criminal justice in the Artificial Intelligence Act (AIA).


-

2021: Looking back at digital rights in the year of resilience

We started 2021 hoping to leave the tremendously challenging year of 2020 behind. The Covid-19 pandemic has had a devastating impact on our societies, causing unprecedented harm to people and economies. If 2020 was the year of the pandemic shock, 2021 was the year of resilience. We had to learn to live with constant uncertainty about what it would take to keep defending human rights: Could we work and walk down the streets without being constantly surveilled? Would efforts to tackle disinformation distort legitimate content, or would they bring down Big Tech instead? Will 2022 be 2021 2.0?


-

The ICO provisionally issues £17 million fine against facial recognition company Clearview AI

Following submissions by EDRi member Privacy International (PI) to the UK Information Commissioner's Office (ICO) and to other European regulators, the ICO has announced its provisional intent to fine Clearview AI.


-

Civil society calls on the EU to put fundamental rights first in the AI Act

Today, 30 November 2021, European Digital Rights (EDRi) and 119 civil society organisations launched a collective statement to call for an Artificial Intelligence Act (AIA) which foregrounds fundamental rights.
