The art of dodging questions – Facebook’s privacy policies

In April 2018, after the Cambridge Analytica scandal broke, we sent Facebook a series of 13 questions about its policy on the exploitation of user data. Months later, Facebook got back to us with answers. Here is a critical analysis of its response.

Recognising people’s faces without biometric data?

The first questions (1a and 1b) related to Facebook’s new facial recognition feature, which scans every uploaded image to search for faces and compares them to those already in its database in order to identify users. Facebook claims that the identification process only works for users who explicitly consented to have the feature enabled and that the initial detection stage, during which the photograph is analysed, does not involve the processing of biometric data. Biometric data is data used to identify a person through unique characteristics like fingerprints or facial features.

There are two issues here. First, contrary to what Facebook declared, the first batch of users for whom face recognition was activated received a notice, but were not asked for consent. All users were opted in by default, and only a visit to the settings page allowed them to say “no”. For the second batch of users, Facebook apparently decided to automatically opt in only those accounts that had the photo tag suggestion feature activated, simply assuming that they wanted face recognition, too. Obviously, this does not constitute explicit consent under the General Data Protection Regulation (GDPR).

Second, even if Facebook does not manage to identify users who disabled the feature or people who are not users, their photos might still be uploaded and their faces scanned. No technology can determine whether an image contains only users who gave consent, without actually scanning every uploaded photo to search for facial features.

Facebook has been presenting this new feature as an empowerment tool for users to control which pictures of them are being uploaded on the platform, to protect privacy and to prevent identity theft. However, EU officials and digital rights advocates denounced this communication practice as manipulating user consent by promoting facial recognition as an identity protection tool.

Privacy settings by default

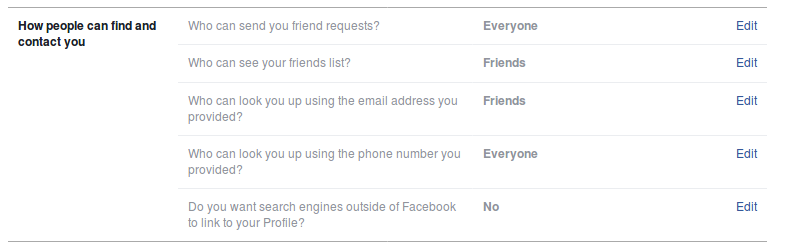

One of our questions related to the initial settings every Facebook user has when creating an account and the level of protection they offer by default (question 3). Facebook responded that it had suspended the search for people by phone number in the Facebook search bar. Since Facebook responded to our questions in August 2018, it appears to have reinstated this function, set to “Everyone can look you up using your phone number” by default (see below the Belgian account settings, last consulted on 24 January 2019).

This reinstatement is probably linked to the upcoming merger of the Facebook-owned messaging systems: Facebook Messenger, WhatsApp and Instagram messaging. The identification requirements of each messaging application are different: a Facebook account for Messenger, a phone number for WhatsApp and an email address for Instagram. The merger gives Facebook the possibility to cross-reference information and to connect several profiles under a single, unified identity. What is worse, Facebook now reportedly makes phone numbers that users had provided for two-factor authentication searchable, and there is no way to switch this feature off.

Other default privacy settings on Facebook are not protective either. Access to a user’s friend list is set to “publicly visible”, for example. Facebook justified the low privacy level by repeating that users join Facebook to connect with others. Yet even if users limit who can see their own friend lists, other people can still reconstruct their Facebook friendships by looking at the publicly accessible friend lists of their friends. Some personal information will simply never be fully private under Facebook’s current privacy policies.

The Cambridge Analytica case

Facebook claimed that its services had been misused and shifted the entire responsibility for the Cambridge Analytica scandal onto the quiz application “This Is Your Digital Life” (our questions 4 and 5). The app requested permission from users to access their personal messages and newsfeed. According to Facebook, there was no unauthorised access to data as the consent was freely given by users. However, accessing one user’s newsfeed and personal messages also meant that the application could access received posts and messages, that is to say content from users who did not consent. Once again, individual privacy is highly dependent on others’ carefulness. Facebook admitted that it wished it had notified the affected users who did not give consent earlier. To our question as to why the appropriate national authorities were not notified of the incident immediately, Facebook gave no answer.

“This Is Your Digital Life” is just one application, but there may be many more that harvest similar amounts of personal data without users’ consent. Facebook assured us that it has made it harder for third parties to misuse its systems. Nevertheless, the limits on the processing of collected data by third parties remain unclear, and we received no answer about the current possibilities for other applications to share and receive users’ messages.

Facebook’s ad targeting practices

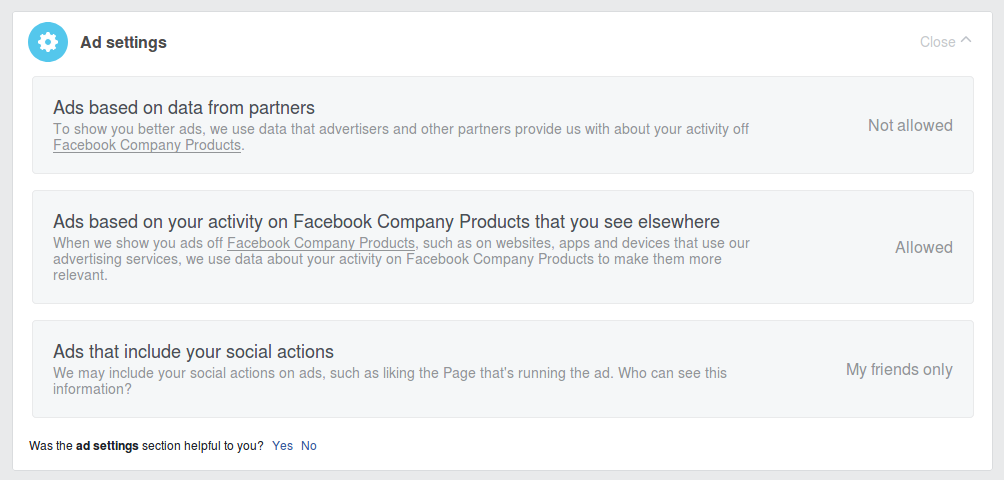

“Advertising is central not only to our ability to operate Facebook, but to the core service that we provide, so we do not offer the ability to disable advertising altogether.” While advertising itself is non-negotiable (our question 9), Facebook explained that through its new Ad Preferences tool (our question 6) users can nevertheless decide whether or not they want to see ads that are targeted at them based on their interests and personal data. The Ad Preferences tool gives users control over the criteria used for targeted advertising: data provided by the user, data collected from Facebook partners, and data based on the user’s activity on Facebook products. Users can also hide advertising topics and disable advertisers with whom they have interacted.

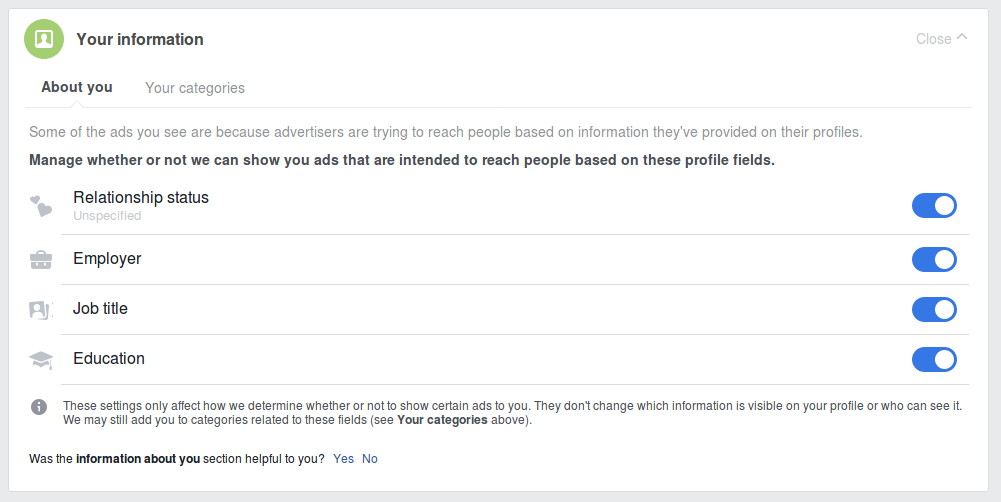

But if Facebook were treating ad settings the same way as privacy settings, as it claims to do, the default settings for a new user would look very different. For this article we created a new Facebook account and found that Facebook does not guide new users through the opt-in and opt-out options for privacy and ad settings. On the contrary, Facebook’s default ad settings involve the profiling of new users based on their relationship status, job title, employer and education (see new account settings below). Those defaults are clearly incompatible with the GDPR’s “privacy by default” requirement.

Ads are also based on activity on Facebook products, which are present on “websites, apps and devices that use [Facebook’s] advertising services”. This includes everything from social media plugins such as “Like” or “Share” buttons to Facebook Messenger, Instagram or even WhatsApp, which has stand-alone terms of service and a separate privacy policy. If a third-party website uses Facebook Analytics, traces left by the user on that website will be used as well. Since Facebook is acquiring more and more applications, the list goes on and on. “Data from different apps can paint a fine-grained and intimate picture of people’s activities, interests, behaviours and routines, some of which can reveal special category data, including information about people’s health or religion.”

In the same vein, EDRi member Privacy International found that Facebook collects personal information on people who are logged out of Facebook or don’t even have a Facebook account. The social media company owns so many apps, “business tools” and services that it is capable of tracking users, non-users and logged-out users across the internet. Facebook doesn’t seem to be willing to change its business practices to respect people’s privacy. Privacy is not about what Facebook users can see of each other, but about what information is accessed and used by third parties, and for which purposes, without the users’ knowledge or consent.

Profiling and automated decision-making

Article 22 of the GDPR introduces a right not to be subject to a decision based solely on automated processing, including profiling, which produces legal or “similarly significant” effects for the user. We asked Facebook what measures it takes to make sure its ad targeting practices, notably for political ads, are compliant with this provision (question 7). In its answer, Facebook considers that its targeted ads based on automated decision-making do not yet have legal or similarly significant effects. In light of the numerous scandals the company has been facing around the manipulation of the 2016 U.S. elections and the Brexit referendum, this answer is quite surprising. Many would argue that the way Facebook targets voters with ads based on automated decision-making does indeed have “similarly significant”, if not legal, effects for its users and for societies as a whole. Unfortunately, Facebook doesn’t seem to consider that it should change its ad targeting practices.

Special categories of data

Article 9 of the GDPR defines special categories of particularly sensitive data that include racial or ethnic origin, political opinions, religious beliefs, health, sexual orientation and biometric data. Facebook says that without the user’s explicit consent to use such special categories of data, they will be deleted from respective profiles and Facebook’s servers (our question 2.a).

What Facebook doesn’t say is that users don’t even need to share this information in order for the platform to monetise it. Facebook can simply deduce religious views, political opinions and health data based on which third-party websites users visit, what they write in Facebook posts, and what they comment on and share. Facebook does not need users to fill in their profile fields when it can infer extremely sensitive information from all the other data users generate on the platform day in, day out. Facebook can then assign different ad preferences (such as “LGBT community”, “Socialist Party”, “Eastern Orthodox Church”) based on each user’s online activities, without asking for consent at all, and exploit them for advertising purposes. Researchers argue that the practice of labelling Facebook users with ad preferences associated with special categories of personal data may be in breach of Article 9 of the GDPR, because no legal basis other than explicit consent could allow this form of use. In its reply to our questions, Facebook deliberately omitted its use of sensitive data derived from user behaviour, posts, comments, likes and so on to feed its marketing profiles. It is too easy to focus on the tip of the iceberg.

Right to access

Replying to our request on the right to access, download, erase or modify personal data, Facebook described its three main tools: Download Your Information (DYI), Access Your Data (AYD) and Clear History (our question 8). According to Facebook, DYI provides users with all the data they have provided on the platform. But as explained above, this does not include information inferred by the platform based on user behaviour, posts, comments, likes and so on, nor information provided by friends or other users, such as tags in photos or posts.

Lastly, Facebook confirmed that it was not using smartphone microphones to inform ads (our question 12). This might even be true, because Facebook already has plenty of surveillance tools at hand to gather enough information about users to produce disconcerting advertisements.

Questions left without answers

- What was the cut-off date before Facebook started deleting information that users had added to their profiles without giving explicit consent for its processing?

- Will Facebook offer a single place where people who have no Facebook account can control every privacy aspect of Facebook?

- If Facebook apps were to use smartphone microphones in any way, would you consider that lawful?

- You claim to offer a way for users to download their data with one click. Can you confirm that the downloaded files contain all the data that Facebook holds on each user?

Written Responses to EDRi Questions (22.06.2018)

https://edri.org/files/edri_responses_facebook_20180622.pdf

Privacy International’s study on ‘How Apps on Android Share Data with Facebook – Report’ (29.12.2018)

https://privacyinternational.org/report/2647/how-apps-android-share-data-facebook-report

Facebook Use of Sensitive Data for Advertising in Europe

https://arxiv.org/pdf/1802.05030.pdf

Facebook Doesn’t Need To Listen Through Your Microphone To Serve You Creepy Ads (13.04.2018)

https://www.eff.org/fr/deeplinks/2018/04/facebook-doesnt-need-listen-through-your-microphone-serve-you-creepy-ads

(Contribution by Chloé Berthélémy, EDRi)